Two days ago I asked my home-built AI assistant a question about itself.

The question was: "I am getting Brock plist drift warnings in HA. Investigate." Brock — that's the assistant — replied with a confident, well-formatted, plausible-sounding answer about how the warnings were "very likely related to a common and serious data corruption issue in Home Assistant involving the use of empty templates feeding utility meters." It went on to explain that the root cause was "the dangerous use of | float(0) in Jinja2 templates that feed a utility_meter set to state_class: total_increasing."

This was completely made up. None of those words have anything to do with what plist drift actually is. Plist drift is detected by a shell script in my own home directory that I wrote — ~/.brock/watchdog/brock_watchdog.sh, Check #9 — which compares the SHA-256 of every macOS LaunchAgent property list file against a canonical snapshot, and alerts when the live copy diverges. There is no utility_meter, no Jinja, no float(0). The model had pattern-matched on the words "drift" and "HA" and confabulated a plausible-sounding story about Home Assistant utility meters.

The answer wasn't wrong because the model is dumb. It was wrong because of an architectural gap on my side: the assistant had no grounded context for "Brock's own infrastructure" as a topic. None of my dozen specialists pulled the actual brock_watchdog.sh source into the prompt. None had a tool that exposed the live alert log. So when faced with a plausible-sounding-but-novel question shape, the model did what models do without grounding: it filled in the gap with text that read like an answer.

I've been building Brock — and a paper-trading sibling called Stockton — for about eighteen months in my home lab. The whole project family is named after Cardinals, which I'll defend to anybody who asks: every specialist needs a position, every position needs a player, and you don't put your shortstop in left field just because they have a glove. Almost everything I've learned about agent reliability has come from incidents like the plist drift one. Not from a single revelation. From accumulating debugging sessions that gradually clarified what an "agent" actually has to be in production, versus what it can pretend to be in a demo. This post is the shape of that accumulated learning, told through the specific moves I made to get to where I am now.

Why I'm running an AI at home

Before any of the architecture matters, there's the boring practical question: why build your own? I'm running an agent at home because the wall I kept hitting with off-the-shelf options had a specific shape, and I want to describe that shape because it tells you whether you should be building your own or not.

I have three solar inverters in the garage that talk Modbus. They have writable registers — many of them dangerous. Setting the wrong register at the wrong time can damage the battery bank, violate the interconnection agreement with my utility, or push current backward through the meter in ways that aren't legal where I live. I want an agent that can adjust charge schedules during the super-off-peak window from 10 p.m. to 6 a.m., when grid power is one quarter the price it is in the late afternoon. I do not want an agent that can adjust anything else.

I have a paper-trading account that runs four strategies (cash-secured puts, defined-risk credit spreads, momentum equity rotation, and long calls) that I want to monitor and occasionally intervene in manually via Telegram slash commands while I'm on the couch. The trades are paper, but the data feeding them isn't: it's Alpaca account state, broker positions, drawdown calculations, Black-Scholes greeks. I do not want my strategy logic, which is real even when the money is paper, sent to a third-party LLM provider's training pipeline.

I have a Home Assistant install with about two thousand entities tracking weather, energy, batteries, lights, locks, vehicles, gardens. The data is intimate — it's where my family is, what we're doing, when we're home. I do not want it sent to a third-party cloud either.

These three things — inverter safety, financial data sovereignty, and home telemetry sensitivity — are the wall. If your use case doesn't have a wall like this, you should probably use a managed agent platform and skip the rest of this post. I'd be using one too if I could. The reason I'm building my own is that paying a vendor doesn't get me what I need.

Six things I built that I didn't know I'd need

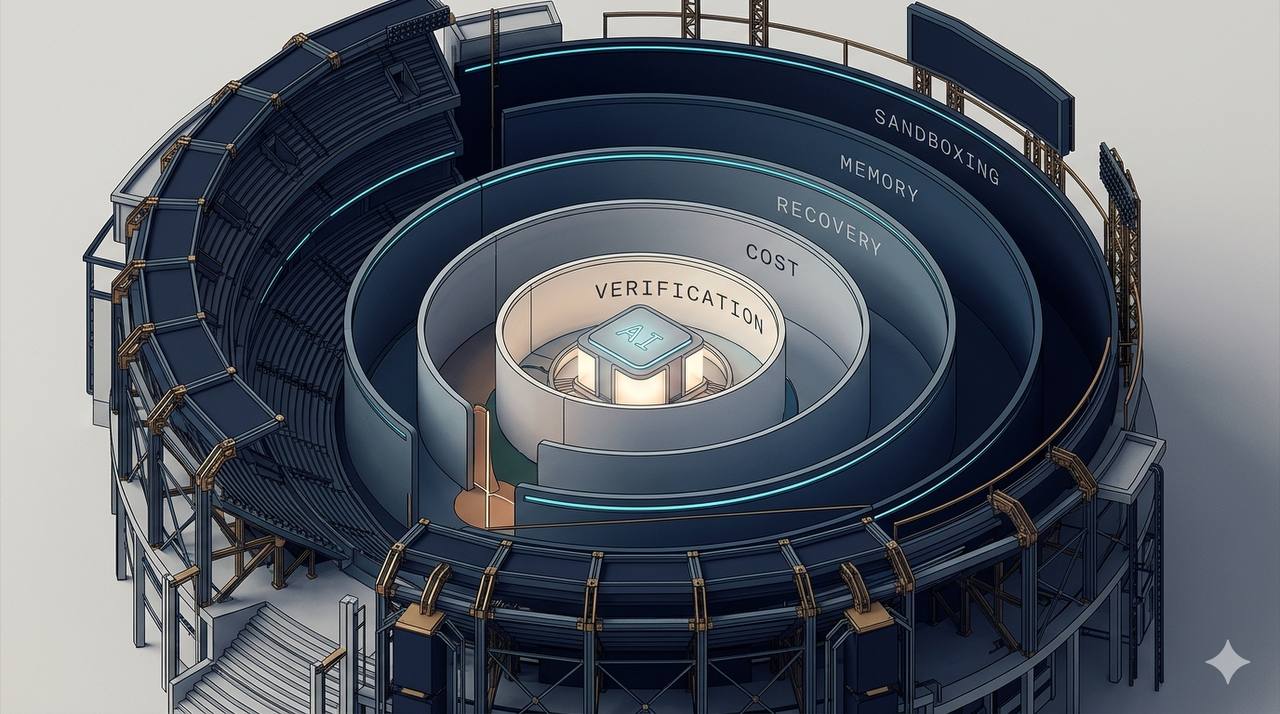

When I started, I had a single agent and a few tools, and I thought that was the whole job. It wasn't. Eighteen months later, the assistant is a CrewAI-based system with twelve domain specialists, a custom router, deterministic state pre-injection, an output verifier, a watchdog daemon, a learning loop, an output scrubber, an entity-id post-validator, and a slash-command surface that bypasses the LLM entirely for deterministic work. None of these were in my original mental model. Each one came out of a specific failure I couldn't engineer my way around with prompt changes alone.

1. Specialist scope: when one agent stopped being able to do everything

For a while I had a single specialist called Cody — utility infielder, the kind of player a manager pencils in everywhere because he can sort of cover everywhere — equipped with shell access, workspace read, workspace write, a Python REPL, and several other tools. Cody was a Swiss Army knife. Cody was also the source of about thirty percent of my reliability incidents, which is the agent equivalent of a .230 batting average: not bad enough to bench, just bad enough that you keep wishing it were better. The pattern was always the same: a complex task would chain four or five tool calls, the model would get confused about which tool's output was relevant to the next decision, and somewhere in the chain it would either loop on the same wrong approach or write a tool call with the wrong arguments and then spend half a context window trying to recover.

The fix wasn't prompt engineering. The fix was structural. I split Cody into four specialists, each with one tool and a narrow purpose: Filer reads and writes the workspace; Rider writes Obsidian vault notes; Scribe reads vault notes; Shell runs allow-listed commands. Each one a position player with one job — first baseman, catcher, closer — instead of one guy trying to play every inning at every position. Routing decisions about which one to use happen one level up, in the supervisor (Brock himself, the manager calling shots from the dugout). Each specialist's prompt got shorter, because it didn't need to disambiguate among capabilities it didn't have. Reliability on multi-step tasks jumped from roughly seventy percent to roughly ninety-five percent in the weeks after the split.
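To make the shape concrete, here is a minimal sketch in plain Python rather than CrewAI's API. The `Specialist` dataclass, the stub tools, and `run_specialist` are illustrative names, not Brock's actual code; the only point is that each specialist holds exactly one callable and the choice among specialists happens outside them.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Specialist:
    name: str
    purpose: str
    tool: Callable[[str], str]  # exactly one tool, by construction


# Stub tools standing in for the real ones (hypothetical placeholders).
def workspace_io(task: str) -> str:
    return f"[workspace] {task}"


def vault_write(task: str) -> str:
    return f"[vault-write] {task}"


def vault_read(task: str) -> str:
    return f"[vault-read] {task}"


def allowlisted_shell(task: str) -> str:
    return f"[shell] {task}"


SPECIALISTS = {
    "filer": Specialist("Filer", "read and write the workspace", workspace_io),
    "rider": Specialist("Rider", "write Obsidian vault notes", vault_write),
    "scribe": Specialist("Scribe", "read vault notes", vault_read),
    "shell": Specialist("Shell", "run allow-listed commands", allowlisted_shell),
}


def run_specialist(key: str, task: str) -> str:
    """Supervisor-level dispatch: the routing decision arrives as `key`."""
    if key not in SPECIALISTS:
        raise ValueError(f"no specialist named {key!r}")
    return SPECIALISTS[key].tool(task)
```

Because a specialist has no second tool to reach for, a bad routing decision fails loudly at the supervisor level instead of quietly inside a long tool chain.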

The lesson, in the most concrete form I can give it: the more tools an agent has, the worse it gets at choosing among them. This is the opposite of what demo aesthetics teach. Demo aesthetics love a single agent that "can do anything." Production wants a manager who knows which arm to call from the bullpen, not a starter who insists on pitching all nine innings.

2. Pre-fetched state: replacing tool calls with ground-truth blocks

A few months after the specialist split, I noticed the remaining unreliable specialists had a different problem. They weren't confused about which tool to call — they only had one — but their answers got hedgy and inconsistent on questions like "how is my paper-trading portfolio doing." The same query would produce different numbers from one invocation to the next, and the model would frequently include phrases like "approximately" and "roughly" for values I had stored to the cent.

I traced this to the chain-of-tool-calls structure: the trading specialist would call get_portfolio, get back JSON, call get_positions, get back more JSON, call get_drawdown_state, get back more JSON, and then try to compose an answer from three different blobs of structured data. By the time the answer was being composed, the prompt was two-thirds JSON tool output, the model was working with a noisy context, and it was hedging because hedging is what models do when they're not confident which value to cite.

The fix that broke this pattern is what I call DSPI — Deterministic State Pre-Injection. Before the LLM is invoked at all, the harness pre-fetches everything the specialist might need (account snapshot, open positions, today's trades, drawdown state, derived metrics like net unrealized P&L and percentage-of-buying-power deployed) and injects all of it into the prompt as a single, well-formatted ground-truth block. The block is labeled "GROUND TRUTH — pre-fetched at <timestamp>; do NOT call tools to re-derive." The specialist's only job is to read the block and answer the question.

The most extreme version of this: my paper-trading and ledger specialists have zero tools. They cannot make any tool call. They cannot fetch anything. They are pure synthesis layers. The DSPI block contains the entire account snapshot plus pre-computed derived metrics calculated by Python before the model is called. The specialist's job is to read the question, find the matching number in the block, and phrase the response. Reliability on these two specialists is roughly ninety-nine percent, because there's nothing for the model to get wrong — the math is correct by construction, the data is fresh by construction, and the linguistic last mile is something LLMs are actually good at.
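A minimal sketch of what building such a block could look like. The field names (`equity`, `buying_power`, `market_value`, `unrealized_pl`) and the definition of percentage deployed (market value of open positions over buying power) are assumptions for illustration, and the header is modeled on the label described above rather than copied from the real harness. `Decimal` is used so that to-the-cent values stay exact.

```python
from datetime import datetime, timezone
from decimal import Decimal


def build_ground_truth_block(account: dict, positions: list[dict],
                             fetched_at: datetime) -> str:
    """Render pre-fetched state as one labeled block for the prompt."""
    zero = Decimal("0")
    # Derived metrics are computed here, in Python, never by the model.
    net_unrealized = sum((p["unrealized_pl"] for p in positions), zero)
    deployed = sum((p["market_value"] for p in positions), zero)
    buying_power = account["buying_power"]
    pct_deployed = (Decimal("100") * deployed / buying_power) if buying_power else zero

    lines = [
        f"GROUND TRUTH: pre-fetched at {fetched_at.isoformat()}; "
        "do NOT call tools to re-derive.",
        f"equity: {account['equity']:.2f}",
        f"buying_power: {buying_power:.2f}",
        f"net_unrealized_pl: {net_unrealized:.2f}",
        f"pct_buying_power_deployed: {pct_deployed:.1f}",
        "positions:",
    ]
    for p in positions:
        lines.append(
            f"  {p['symbol']}: market_value={p['market_value']:.2f} "
            f"unrealized_pl={p['unrealized_pl']:.2f}"
        )
    return "\n".join(lines)
```

The returned string is prepended to the specialist's prompt; since the specialist has no tools, every number it can cite has to come from this block.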

The principle that fell out: if the answer is fetchable, fetch it deterministically and inject it as ground truth. Tools are for actions. Data is for prompts. A lot of "agentic" patterns I see in tutorials are actually data-fetching patterns dressed up as tool use, and they pay an unnecessary reliability tax for the costume.

3. A second model that runs when the first one flakes

The mistral-small3.2 model I run for most specialists has a specific failure mode: about five to ten percent of the time, on long prompts with multiple sections, it returns an empty content response. Not an error. Not a refusal. An actual empty string — the equivalent of your starter walking the bases loaded and then just... staring at home plate. CrewAI then proceeds as if the model said nothing, the user gets a blank reply, and the conversation memory records a turn with no content.

I tried various prompt fixes; none worked reliably. The flake rate was apparently inherent to the model under those conditions. So instead of trying to fix the flake, I made the flake recoverable: when the trading or ledger specialist returns empty content, the harness automatically retries with qwen3.6:27b, a different local model with a different architecture, running on the same Ollama server. Manager goes to the bullpen, calls in a left-hander to face a left-handed lineup. Different architectures have different failure modes; running the same input through both gives me reliability diversity for free.
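The retry logic itself is small. Here is a sketch under the assumption that each model is wrapped as a plain callable taking a prompt and returning text; `generate_with_fallback` and the stub callables are hypothetical names, and the actual Ollama calls are deliberately left out.

```python
from typing import Callable

ModelFn = Callable[[str], str]


def generate_with_fallback(prompt: str, primary: ModelFn,
                           fallback: ModelFn) -> tuple[str, str]:
    """Return (reply, which_model). Falls back only on empty content."""
    reply = primary(prompt)
    if reply and reply.strip():
        return reply, "primary"

    # Empty or whitespace-only reply: same prompt, different local model.
    reply = fallback(prompt)
    if reply and reply.strip():
        return reply, "fallback"

    # Both flaked: fail loudly instead of recording a blank turn.
    raise RuntimeError("both models returned empty content")
```

Raising when both models come back empty keeps a blank reply from ever reaching the user or the conversation memory.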

The temptation when I first hit this was to use a cloud model as the fallback, because cloud models flake less often. I almost did. The reason I didn't is the same reason I'm not on a managed platform in the first place: the data leaving the home is the entire reason the home setup exists. A "reliability" cloud fallback that quietly leaks Stockton position data is just data leakage with a different label. So the rule, written down where I'll see it the next time I'm tempted: routine specialists are local, always. Reliability fallbacks for those specialists are a different local model, never a cloud one. The single carve-out is a planned debug specialist for ops root-cause analysis, where the data is log lines and code (not financial state) and the volume is low; that one can be cloud, and is being treated as a deliberate, documented exception rather than the start of a slippery slope.

4. A watchdog that watches things the agent can't notice

LaunchAgent KeepAlive on macOS will restart a process that crashed. It will not restart a process that is alive but hung, blocked on a slow HTTP call, or wedged in an inference loop the way Ollama occasionally gets when fed an unfortunate prompt sequence. My agent's reliability was better than the underlying service uptime suggested it should be — but only up to the point where Ollama on the GPU box started returning 30-second timeouts every few minutes for an hour straight. KeepAlive saw a healthy process. The agent saw a wall of timeout errors and started trying to recover from inside its own context. That's not where recovery should happen.

The fix lives outside the agent: a separate daemon, wedge_detector, probes each long-running service's health endpoint every thirty seconds with a five-second timeout. After three consecutive failures it kicks the service with launchctl kickstart -k, then sends a Telegram alert. This is the watchdog that watches the watchdog: it catches alive-but-hung processes that the OS-level supervisor can't see.
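A rough sketch of that probe loop, using only the Python standard library. `WedgeTracker`, `probe`, `kick`, and `watch` are illustrative names, the health URLs and launchd service targets are whatever your own services use, and the Telegram alert is left as a comment; this is not the real wedge_detector.

```python
import subprocess
import time
import urllib.request

FAILURE_THRESHOLD = 3   # consecutive failures before a kick
PROBE_TIMEOUT_S = 5
PROBE_INTERVAL_S = 30


def probe(url: str, timeout: float = PROBE_TIMEOUT_S) -> bool:
    """True if the health endpoint answers 2xx within the timeout."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 300
    except Exception:
        return False  # any failure (timeout, refused, 5xx) counts as unhealthy


class WedgeTracker:
    """Counts consecutive failures per service."""

    def __init__(self, threshold: int = FAILURE_THRESHOLD) -> None:
        self.threshold = threshold
        self.failures: dict[str, int] = {}

    def record(self, service: str, healthy: bool) -> bool:
        """Record one probe result; return True when the service should be kicked."""
        if healthy:
            self.failures[service] = 0
            return False
        self.failures[service] = self.failures.get(service, 0) + 1
        if self.failures[service] >= self.threshold:
            self.failures[service] = 0  # start counting again after the kick
            return True
        return False


def kick(service_target: str) -> None:
    # service_target is a launchd target such as "gui/<uid>/<label>".
    subprocess.run(["launchctl", "kickstart", "-k", service_target], check=False)


def watch(services: dict[str, tuple[str, str]]) -> None:
    """services maps a name to (health_url, launchd_target)."""
    tracker = WedgeTracker()
    while True:
        for name, (health_url, target) in services.items():
            if tracker.record(name, probe(health_url)):
                kick(target)
                # A Telegram alert would be sent here.
        time.sleep(PROBE_INTERVAL_S)
```

Keeping the counting logic in `WedgeTracker`, separate from the network and `launchctl` calls, means the part most likely to have an off-by-one bug can be tested without touching a real service.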

A second daemon — brock_watchdog.sh, the same one that prompted this post — checks LaunchAgent plist drift. Property list files on macOS are configuration; if one drifts (a bad update script, a manual edit, a corrupted write), the agent's environment has changed under its feet. The watchdog hashes every plist against a canonical snapshot, alerts on divergence, and auto-restores from canonical if the live file fails plutil -lint validation or is implausibly small. This caught two real incidents in the past quarter — one homebrew update truncated a plist, and one stale install script overwrote a customized RunAtLoad=false setting that I had specifically set after another incident.
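The real check is a shell script; the sketch below restates the same idea in Python so the logic is easy to read. The function names and the 64-byte size floor are assumptions, and `plistlib` parsing stands in for `plutil -lint` so the example runs anywhere.

```python
import hashlib
import plistlib
from pathlib import Path

MIN_PLAUSIBLE_BYTES = 64  # assumed floor; a real plist is larger than this


def sha256_of(path: Path) -> str:
    return hashlib.sha256(path.read_bytes()).hexdigest()


def find_drift(live_dir: Path, canonical_dir: Path) -> list[str]:
    """Names of plists whose live copy is missing or differs from canonical."""
    drifted = []
    for canonical in sorted(canonical_dir.glob("*.plist")):
        live = live_dir / canonical.name
        if not live.exists() or sha256_of(live) != sha256_of(canonical):
            drifted.append(canonical.name)
    return drifted


def looks_corrupt(path: Path) -> bool:
    """True if the file is implausibly small or does not parse as a plist."""
    data = path.read_bytes()
    if len(data) < MIN_PLAUSIBLE_BYTES:
        return True
    try:
        plistlib.loads(data)
    except Exception:
        return True
    return False
```

Drift alone only triggers an alert, since a divergent but valid plist might be an intentional edit. Restoring from canonical is reserved for files that `looks_corrupt` flags.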

The lesson: a production agent isn't an agent that doesn't fail. It's an agent that fails, has something outside it that notices the failure, and has a recovery primitive that runs without the agent's involvement. Pitcher walks the leadoff hitter? You don't ask the pitcher whether he's still got it. You watch from the dugout, and somebody else makes the call. If the failure detection lives inside the agent's own loop, the agent is also the thing failing, and you can't trust it to notice.

5. A separate model that verifies — and why I added it the day after the plist incident

This brings us back to the plist drift hallucination at the top of this post.

I built two things in the days after that incident. The first was the obvious one: I extended the RAG ingest pipeline with a new subject called brock_internals, and pointed it at ~/.brock/watchdog/, ~/.brock/scripts/, and ~/.brock/src/brock/. I wrote a tool called WatchdogStateTool that pre-fetches the last fifty lines of the alert log, the LaunchAgent inventory, recent /notify activity, and the live drift status, and emits all of it as a DSPI ground-truth block. I wired the supervisor to recognize ops-shaped queries and route them to specialists with this DSPI active. After this fix, the same plist drift question got an answer that correctly cited my actual watchdog code. Good.

But the more important thing I built was the second thing: an output verifier. A separate, small model (the same gemma4:e4b that runs my router — local, fast, already loaded) that reviews each specialist's response before it ships to me. It asks three questions: does the response cite specific facts that are in the ground-truth block or attributed via citation tags; does it actually answer the user's question or did it riff on a related-but-different topic; does it use hedging-confabulation phrases ("very likely related to," "this is a common issue," "the root cause is generally") without specific cited evidence. The verifier returns PASS or FAIL with a one-sentence reason. On FAIL, the harness re-prompts the same specialist with stricter instructions, and if that still fails, it returns a hedged refusal — "I don't have grounded data to answer this, please rephrase or escalate" — which is a much better outcome than a confidently wrong answer.
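The control flow around the verifier can be sketched in a few lines. Here the specialist and verifier are plain callables (hypothetical signatures, with the model calls left out): the specialist takes a `strict` flag for the re-prompt, and the verifier returns a `(passed, reason)` pair.

```python
from typing import Callable

REFUSAL = "I don't have grounded data to answer this, please rephrase or escalate"

# (question, ground_truth, strict) -> draft answer
SpecialistFn = Callable[[str, str, bool], str]
# (question, ground_truth, draft) -> (passed, one-sentence reason)
VerifierFn = Callable[[str, str, str], tuple[bool, str]]


def answer_with_verification(question: str, ground_truth: str,
                             specialist: SpecialistFn,
                             verifier: VerifierFn) -> str:
    """Ship a draft only if a separate verifier passes it."""
    draft = specialist(question, ground_truth, False)
    passed, _reason = verifier(question, ground_truth, draft)
    if passed:
        return draft

    # One retry with stricter instructions, judged by the same verifier.
    retry = specialist(question, ground_truth, True)
    passed, _reason = verifier(question, ground_truth, retry)
    if passed:
        return retry

    # Still failing: a hedged refusal beats a confidently wrong answer.
    return REFUSAL
```

The specialist never sees the verifier's verdict as something it can argue with; the only outcomes are ship, retry once, or refuse.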

The reason this matters more than the plist-specific fix: I'll never have ground truth for every novel question shape. I can keep building DSPI tools forever and the model will still occasionally find a question I didn't anticipate, and it will still confabulate when it does. The verifier doesn't fix the confabulation; it catches it post-hoc and prevents shipping. The structural rule is: never let the producing agent verify its own output. You don't ask the batter to call his own balls and strikes. Always a separate model, or deterministic Python code (which is how my entity-id post-validator works — it rejects any response that contains a Home Assistant entity_id not present in the ground-truth block or in a known-aliases dictionary).
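A deterministic check of that kind might look like the sketch below. The short `KNOWN_DOMAINS` tuple and the function name are assumptions for illustration; restricting the regex to known Home Assistant domains keeps it from flagging things like file names that merely contain a dot.

```python
import re

# Assumed subset of Home Assistant domains; extend to match your install.
KNOWN_DOMAINS = ("sensor", "binary_sensor", "light", "switch", "lock", "climate", "cover")

ENTITY_RE = re.compile(r"\b(?:" + "|".join(KNOWN_DOMAINS) + r")\.[a-z0-9_]+\b")


def unknown_entity_ids(response: str, ground_truth: str,
                       aliases: set[str]) -> set[str]:
    """Entity ids cited in the response that are neither in the block nor aliased."""
    allowed = set(ENTITY_RE.findall(ground_truth)) | set(aliases)
    return set(ENTITY_RE.findall(response)) - allowed
```

A non-empty result means the response gets rejected before it ships:

```python
block = "sensor.battery_soc: 81\nlight.garage: off"
reply = "sensor.battery_soc is at 81 and sensor.utility_meter_total is rising."
unknown_entity_ids(reply, block, aliases=set())
```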

6. A slash-command surface that doesn't use the LLM at all

Some questions don't need an LLM. "What's the current TOU rate window?" has one correct answer, and it's a function of the wall clock and a config file. "What was the score of the Cardinals game?" has one correct answer, and it's a JSON response from the MLB Stats API. (Yes, I check it constantly. No, I won't apologize.) "How much have I deployed in paper-trading positions?" has one correct answer, and it's a sum operation on a SQLite table.

For all of these, I built deterministic slash commands. /grid, /cardinals, /portfolio. They run as Python handlers in the Telegram bridge. The LLM is not invoked. The user types the slash command, the handler does its job, the response goes back. There is no reasoning step, no tool selection, no chance of confabulation, no token cost. It's the bunt single of the system architecture — unglamorous, reliable, gets you on base.
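As an illustration, here is what a `/grid`-style handler and its dispatch could look like. Only the 10 p.m. to 6 a.m. super-off-peak window comes from this post; every other hour is lumped into a placeholder "other" window, rates are omitted, and the names `tou_window` and `handle_message` are hypothetical.

```python
from datetime import datetime, time
from typing import Callable, Optional

SUPER_OFF_PEAK_START = time(22, 0)  # 10 p.m.
SUPER_OFF_PEAK_END = time(6, 0)     # 6 a.m.


def tou_window(now: datetime) -> str:
    """Pure function of the wall clock; the window wraps past midnight."""
    t = now.time()
    if t >= SUPER_OFF_PEAK_START or t < SUPER_OFF_PEAK_END:
        return "super-off-peak"
    return "other"  # placeholder for the remaining windows


def handle_grid(now: datetime) -> str:
    return f"Current TOU window: {tou_window(now)}"


HANDLERS: dict[str, Callable[[datetime], str]] = {
    "/grid": handle_grid,
}


def handle_message(message: str, now: datetime) -> Optional[str]:
    """Reply for a known slash command, or None to fall through to the LLM path."""
    parts = message.strip().split(maxsplit=1)
    if not parts:
        return None
    handler = HANDLERS.get(parts[0])
    return handler(now) if handler else None
```

Passing `now` in as an argument, rather than reading the clock inside the handler, keeps the function testable at the window boundaries.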

This is the layer of the system that's most boring and most reliable. It's the layer that handles maybe forty percent of my actual usage. And it took me longer than it should have to build, because for a long time I was trying to make the LLM handle these questions "elegantly" via tool calls, and the LLM was reliably mediocre at that compared to a forty-line Python function with a regex.

The principle I'd give to someone starting: not every question needs an agent. If the answer is deterministic, write a function. Save the agent for questions where reasoning genuinely happens.

What stayed, what changed, what I'd do differently

A few things have stayed constant since the start. I'm still running on local hardware with a hard rule against routine cloud LLM use. I'm still on Telegram as my primary surface, supplemented by a web chat for longer sessions and a dashboard for read-only views. I'm still using CrewAI as the agent framework (though with my own supervisor and custom routing, and with most of its built-in orchestration removed). I'm still using Obsidian as the source of truth for documentation, which a daily ingest job pulls into a Chroma vector store on the GPU host.

A few things changed two or three times. The supervisor topology evolved from a CrewAI hierarchical mode (which spiraled into agent-to-agent delegation chains of fifteen-plus calls per chat, and was where most of my early latency complaints came from) through a Brock-Lite cloud-frontier router (which worked but contradicted the local-only commitment) to the current setup, where a small local model handles routing and a local-only specialist handles the actual answer. The model assignment for specialists got swapped twice — first from cloud kimi-k2.6 to local mistral-small3.2 once mistral was passing my regression suite, then from mistral as the only model to mistral-with-qwen-fallback once I had data on the empty-content flake rate.

The biggest thing I'd do differently: I would build the watchdog and the output verifier on day one, before I had specialists, before I had RAG, before I had any of the rest. I built them after specific failure incidents told me I needed them. Each incident was preventable in retrospect. The cost of building them up front is roughly two days of work; the cost of not having them when you hit the incident is, in my experience, roughly two weeks of fragile production behavior plus a slow erosion of trust among the people the agent is supposed to be helping.

What it does for me now

This is the part that, to me, justifies the eighteen months.

At ten p.m. on a typical evening, Brock looks at the next day's PV forecast (pulled from my WeatherFlow station via Home Assistant), checks the current battery state of charge, calculates whether tomorrow's solar will fully cover the day's anticipated load, and makes a binary decision: do we charge the batteries from grid during the cheap super-off-peak window, or do we coast on what's already in the bank. The decision is encoded as a Modbus write to one of the four allow-listed registers. I get a Telegram message with the rationale.
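The decision and the write guard can be sketched as two small functions. Everything specific here is an assumption: the rule (charge when tomorrow's expected shortfall exceeds what the battery already holds), the parameter names, and the register addresses, which are placeholders rather than real inverter registers.

```python
from typing import Callable

# Placeholder addresses; the real allow-list holds four inverter registers.
ALLOWED_REGISTERS = frozenset({0x0001, 0x0002, 0x0003, 0x0004})


def should_charge_from_grid(pv_forecast_kwh: float, load_forecast_kwh: float,
                            soc_pct: float, battery_capacity_kwh: float) -> bool:
    """Charge overnight only if solar plus stored energy won't cover the load."""
    stored_kwh = battery_capacity_kwh * soc_pct / 100.0
    shortfall_kwh = load_forecast_kwh - pv_forecast_kwh
    return shortfall_kwh > stored_kwh


def guarded_write(register: int, value: int,
                  write_fn: Callable[[int, int], None]) -> None:
    """Refuse any Modbus write outside the allow-list before it reaches the bus."""
    if register not in ALLOWED_REGISTERS:
        raise PermissionError(f"register {register:#06x} is not allow-listed")
    write_fn(register, value)
```

The guard sits in code, below the model, so no prompt or tool argument can widen the set of registers the agent is able to touch.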

In the morning, around six-thirty, Brock assembles a briefing: yesterday's energy production and grid import, last night's charging decision and outcome, weather summary for the day, the paper-trading queue's planned moves at market open, any pending Brock learning-loop fixes I need to approve, and the current state of any in-flight conversations from the previous evening. The briefing arrives in my inbox before I'm up.

Throughout the day, slash commands handle the small operational asks — /grid for current TOU window and rate, /cardinals for last night's game, /portfolio for positions, /blocked for any paper trades that the strategy gates declined to submit. The longer interactive questions — "why did Augustus stop charging at 4 a.m.," "what's the divergence between my projected May bill and HA's running total," "draft a reply to this email" — go through the LLM specialists with full DSPI context and verification.

Brock occasionally still gets things wrong. Even the best hitters are out two-thirds of the time; an agent that's right most of the time is doing the job, as long as the wrongness gets caught before it ships. The verifier catches a meaningful fraction of that wrongness on the way to home plate. The learning loop captures what gets through, classifies the misses overnight, and proposes fixes I review the next morning — the post-game film session, basically. Over time the surface area of what fails has shrunk; what's left is the long tail of novel question shapes I haven't designed for yet.

What I'd tell someone starting

Build the watchdog before you need it. Pre-fetch ground truth instead of asking the model to call tools. Split wide tool surfaces into narrow specialists. Add a separate verifier model before you ship anything that matters. Use slash commands and Python functions for deterministic work; save the LLM for questions where reasoning actually has to happen. Stay local for sensitive data; carve cloud out as deliberate exceptions, never as defaults.

And don't underestimate how much of the work is mundane infrastructure — file syncs, log tails, plist hashes, retry loops, restart scripts, alert routing. The agent is the visible part — the guy on the mound. The infrastructure underneath it is the dugout, the bullpen, the trainers, the scouting reports, the groundskeeper who chalks the lines before every game. That layer is probably eighty percent of what I've actually built. Brock is twelve specialists, and none of them think they can do everything. That is the most important thing I learned, expressed as a sentence about the system, but the thing under that sentence is that I had to learn it about the model first. The model can't do everything. Stop asking it to. Build the scaffolding that lets it do the parts it's good at, and let everything else be code.

That's eighteen months in one paragraph. The rest of the time has been figuring out which decisions belong where, which is its own kind of long season — 162 games, no shortcuts, and a lot of plays that don't show up in the box score.