I have a friend who's been waiting eight months to ship his AI agent. The reason changes — first he was waiting for GPT-5, then Claude Opus 4.6, then Gemini Ultra, now whatever's coming next. Each release, he runs his benchmark, sees a small improvement, and decides the system still isn't ready. He keeps telling me the agent is "almost there." It's been almost there since September.

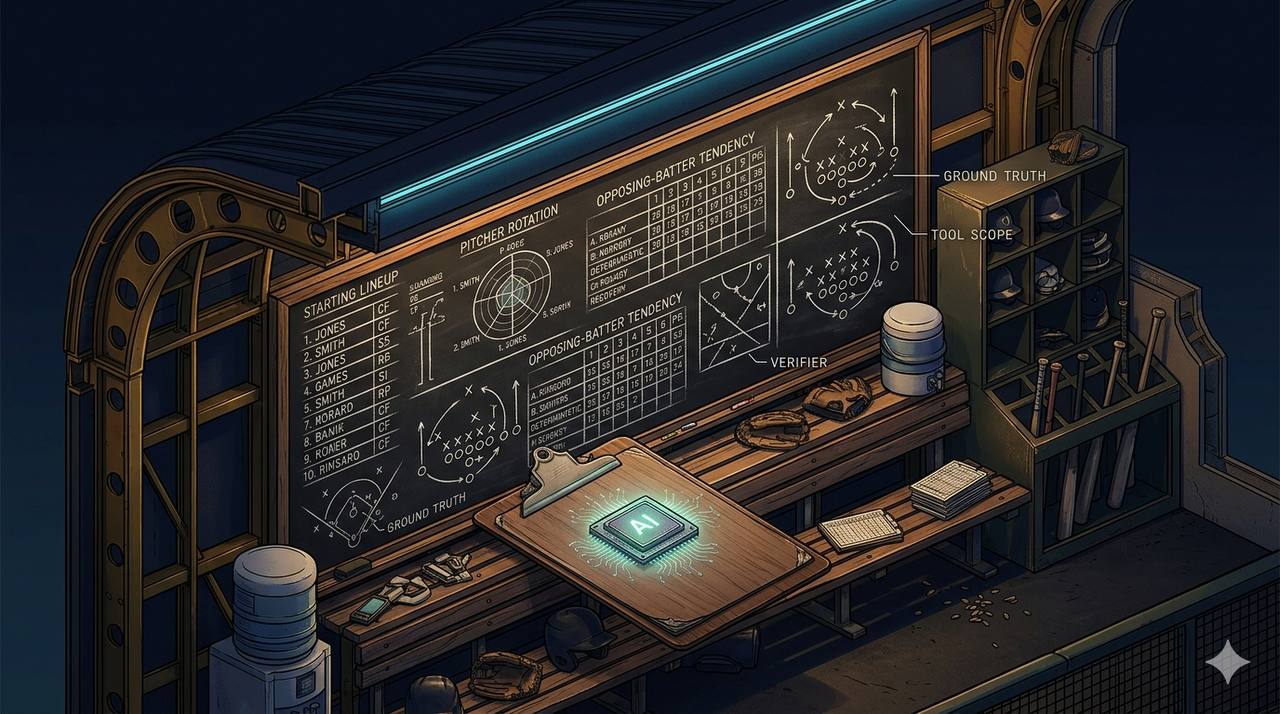

I've watched his agent run. The agent's problem is not the model. The agent's problem is that it has fifteen tools, no ground truth pre-fetched anywhere, no indication of which tool is supposed to handle which question, and a five-thousand-token system prompt that tries to disambiguate by sheer verbosity. He could run that agent on Claude Opus 5 in 2027 and it would still fail in roughly the same places, because the model isn't his bottleneck. His planning is.

This is the post I should have written before the one I posted yesterday about building Brock — my home-built CrewAI assistant — and the eighteen months of harness scaffolding that went into making it reliable. Yesterday's post was about what the scaffolding is. Today's is about the layer one level up: deciding what the scaffolding needs to be in the first place, before you write a line of code. That work — the planning — is most of what determines whether your agent works. And it's almost completely independent of the underlying model.

I want to make a strong claim, defensible from my own experience: swapping models is the wrong first move for almost every reliability problem in agent design. A better model masks bad planning for a while, then breaks under it the moment the workload gets harder. Better planning with a worse model often dramatically outperforms a better model with no planning. What's more, planning is cheaper, faster to iterate on, and doesn't have a shipping calendar.

Let me show you what I mean by "planning."

What planning actually means at the agent layer

When I say "plan your agent," I don't mean draw an architecture diagram in Miro. I don't mean write a project spec. I mean five concrete decisions you make on a whiteboard, before you call a single LLM API.

- What state will the agent see when it tries to answer? Not what it can fetch, but what it sees in the prompt at the moment it generates a response. This is the most important question and almost nobody asks it.

- What tools is the agent allowed to call, and what's the blast radius of each one? Specifically not "what could it possibly need" but "what's the minimum it needs to do its job, and what's the worst that can happen if it picks the wrong one."

- What questions can be answered deterministically without invoking the model at all? Asked another way: which forty percent of your "agentic" workload is actually a Python function with a regex.

- Who or what verifies the response before it ships? The LLM that produced it doesn't count. That's not verification, that's confirmation.

- What happens when the agent fails, and how does the system notice? Not "what does the agent do when it gets a tool error" but "what does the system around the agent do when the agent itself is the thing failing."

Each of these works at GPT-5 quality if you've planned for it. Each of these fails at GPT-5 quality if you haven't. The model is the constant, basically. The planning is the variable. And the variance is enormous.

Let me walk through each one with a concrete example from Brock. Not in the abstract — in the actual decisions that came up, what I planned wrong the first time, and what changed when I planned better.

1. Plan what state the agent has

When my paper-trading specialist gets a query like "how is the portfolio doing today," there are two ways to architect the answer.

The way most tutorials show: the specialist has tools called get_portfolio_summary, get_open_positions, get_daily_pnl, get_drawdown_state, and so on. The model sees the question, picks tools, calls them in sequence, parses the JSON outputs, composes an answer. This is what "agentic" looks like in 2026. It's also where my specialist used to hedge constantly, sometimes give different numbers from one invocation to the next, and occasionally just give up and say "I'm having trouble retrieving your portfolio data."

The way Brock actually does it: the harness pre-fetches the entire account snapshot — positions, today's trades, drawdown state, derived metrics computed in Python before the model is invoked — and injects all of it into the prompt as a single, well-formatted ground-truth block. The specialist has zero tools. It cannot fetch anything. Its only job is to read the block and answer the question.

The reliability difference is roughly thirty percentage points. And the model is the same model.

What changed is the planning answer to question one. In version A, "what state will the agent see when it tries to answer" is "whatever it manages to fetch through tool calls, in whatever order, with whatever parsing succeeds." In version B, the answer is "all of it, formatted, deterministic, every time." That's not a model question. That's a planning question. You can ask it on a whiteboard with no LLM in the room.
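To make version B concrete, here is a minimal sketch of a pre-fetched ground-truth block. It is an illustration under assumptions, not Brock's actual harness: the `Position` fields, the block delimiters, the function names, and the sample numbers are all made up for the example. The point it shows is that every derived number is computed in Python before the model ever sees the question.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Position:
    # Hypothetical shape of one row in the account snapshot.
    symbol: str
    quantity: int
    avg_cost: float
    last_price: float


def build_ground_truth_block(positions: List[Position], realized_pnl_today: float) -> str:
    """Compute derived metrics in Python and render them as plain text."""
    lines = ["=== GROUND TRUTH: ACCOUNT SNAPSHOT ==="]
    total_unrealized = 0.0
    for p in positions:
        unrealized = (p.last_price - p.avg_cost) * p.quantity
        total_unrealized += unrealized
        lines.append(
            f"{p.symbol}: qty={p.quantity} avg_cost={p.avg_cost:.2f} "
            f"last={p.last_price:.2f} unrealized_pnl={unrealized:+.2f}"
        )
    lines.append(f"realized_pnl_today={realized_pnl_today:+.2f}")
    lines.append(f"total_unrealized_pnl={total_unrealized:+.2f}")
    lines.append("=== END GROUND TRUTH ===")
    return "\n".join(lines)


def build_prompt(question: str, ground_truth: str) -> str:
    """The specialist gets no tools; everything it may cite is in the block."""
    return (
        "Answer using only the facts in the ground-truth block below. "
        "If the block does not contain the answer, say so.\n\n"
        f"{ground_truth}\n\nQuestion: {question}"
    )


# Made-up sample data, only to show the rendered block.
snapshot = build_ground_truth_block(
    [Position("AAPL", 10, 180.00, 185.50), Position("MSFT", 5, 410.00, 402.00)],
    realized_pnl_today=12.25,
)
prompt = build_prompt("How is the portfolio doing today?", snapshot)
```

The model's job shrinks to reading and phrasing. Whether a real harness would use this exact layout is a design choice; what matters is that the block is identical for identical account state.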

The same principle applies to anything where the answer is derivable: the agent shouldn't be deriving it. Tools are for actions. Data is for prompts. A lot of "agentic" patterns I see in the wild are data-fetching patterns dressed up as tool use, and they pay an unnecessary reliability tax for the costume. The frontier model doesn't help; it just hedges with better grammar.

2. Plan the tool surface

The temptation, when you give an agent tools, is to be generous. Give it shell access. Give it a Python REPL. Give it workspace read and write. Give it everything it might conceivably need.

I did this for the first six months of Brock. I had a single specialist called Cody who was equipped with shell, workspace read, workspace write, a REPL, and several other tools. Cody was the team's utility infielder — sort of capable everywhere, reliably mediocre at all of it. About thirty percent of complex tasks failed somewhere in the chain because the model couldn't decide which tool the next step needed.

The fix wasn't a better model. It was the opposite. I split Cody into four specialists, each with one tool: Filer reads and writes the workspace, Rider writes Obsidian vault notes, Scribe reads vault notes, Shell runs allow-listed commands. Routing decisions about which one to use moved one level up, into a supervisor who decides at chat time. Each specialist's prompt got dramatically shorter because it didn't need to disambiguate among capabilities it didn't have.

Reliability on multi-step tasks went from roughly seventy percent to roughly ninety-five percent. Same model. Same tools, redistributed. The difference was the planning answer to question two. In version A, the answer was "give Cody everything; the model will figure it out." In version B, the answer was "give each specialist exactly one thing; the supervisor figures it out."
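A rough sketch of the version B shape, assuming a simple registry of one-tool specialists and a pluggable routing function. The names here (`Specialist`, `dispatch`, `keyword_router`) and the stubbed tools are hypothetical; in particular, the keyword router is only a stand-in for the supervisor's decision, which in a real system might be made by a model.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Specialist:
    name: str
    tool: Callable[[str], str]  # exactly one capability
    prompt: str                 # short, because there is nothing to disambiguate


def workspace_tool(task: str) -> str:
    # Stub: a real tool would read or write workspace files.
    return f"[filer] handled: {task}"


def shell_tool(task: str) -> str:
    # Stub: a real tool would run an allow-listed command.
    return f"[shell] handled: {task}"


SPECIALISTS: Dict[str, Specialist] = {
    "filer": Specialist("filer", workspace_tool, "You read and write workspace files."),
    "shell": Specialist("shell", shell_tool, "You run allow-listed commands."),
}


def keyword_router(task: str) -> str:
    """Stand-in for the supervisor's routing decision."""
    return "shell" if task.lower().startswith("run ") else "filer"


def dispatch(task: str, route: Callable[[str], str] = keyword_router) -> str:
    """Route one level up, then hand the task to a single-tool specialist."""
    name = route(task)
    if name not in SPECIALISTS:
        raise ValueError(f"router picked unknown specialist: {name!r}")
    return SPECIALISTS[name].tool(task)
```

The specialist never chooses among tools, so a wrong routing decision is visible in one place (the router) instead of being buried mid-chain.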

There's a bigger principle hiding here that I think is one of the most underappreciated facts about LLMs in agent design: the more tools an agent has, the worse it gets at choosing among them. This is the opposite of what demo aesthetics teach — demos love a Swiss Army knife agent. But Swiss Army knives are bad at any one specific cut. Production wants a manager who knows which arm to call from the bullpen, not a starter who insists on pitching all nine innings while also catching, also playing third, also coaching the rotation.

A better model will be marginally better at picking among fifteen tools. A worse model with three tools will outperform it. Plan the tools narrow.

3. Plan what the LLM doesn't touch

This is the planning question that took me longest to internalize, and the one I think most agent builders skip entirely.

Some questions don't need an LLM. "What's the current Coastal Electric time-of-use window and rate?" has one correct answer, and it's a function of the wall clock and a config file. "What was the score of the Cardinals game last night?" has one correct answer, and it's a JSON response from the MLB Stats API. "How much have I deployed in paper-trading positions?" has one correct answer, and it's a sum operation on a SQLite table.

For all of these, I built deterministic slash commands. /grid, /cardinals, /portfolio. They run as Python handlers in Brock's Telegram bridge. The LLM is never invoked. The user types the slash command, the handler does its job, the response goes back. There is no reasoning step, no tool selection, no chance of confabulation, no token cost.
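Here is a small sketch of what such handlers can look like. Everything specific in it is an assumption for illustration: the time-of-use hours and rates, the `positions` table schema, and the function names are invented, and the Telegram wiring is left out entirely. A message that isn't a known slash command returns `None`, which is the signal to fall through to the agent.

```python
import sqlite3
from datetime import datetime, time
from typing import Callable, Dict, Optional

# Hypothetical schedule: (start_hour, end_hour, label, dollars per kWh).
TOU_WINDOWS = [
    (0, 16, "off-peak", 0.12),
    (16, 21, "on-peak", 0.34),
    (21, 24, "off-peak", 0.12),
]


def grid(now: time) -> str:
    """Current time-of-use window: a function of the clock and a config table."""
    for start_hour, end_hour, label, rate in TOU_WINDOWS:
        if start_hour <= now.hour < end_hour:
            return f"{label}: ${rate:.2f}/kWh"
    raise ValueError("TOU_WINDOWS does not cover this hour")


def portfolio(conn: sqlite3.Connection) -> str:
    """Deployed capital: a single SUM over a SQLite table."""
    (deployed,) = conn.execute(
        "SELECT COALESCE(SUM(quantity * avg_cost), 0) FROM positions"
    ).fetchone()
    return f"Deployed: ${deployed:,.2f}"


def handle_message(text: str, handlers: Dict[str, Callable[[], str]]) -> Optional[str]:
    """Return a deterministic reply, or None to fall through to the agent."""
    parts = text.strip().split(maxsplit=1)
    handler = handlers.get(parts[0]) if parts else None
    return handler() if handler else None


# In-memory database with made-up rows, only for the example.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE positions (symbol TEXT, quantity INTEGER, avg_cost REAL)")
conn.executemany(
    "INSERT INTO positions VALUES (?, ?, ?)",
    [("AAPL", 10, 180.0), ("MSFT", 5, 410.0)],
)

handlers = {
    "/grid": lambda: grid(datetime.now().time()),
    "/portfolio": lambda: portfolio(conn),
}
```

Because `grid` takes the time as an argument, it can be tested against fixed clock values without any model or network in the loop.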

This is the layer of Brock that's most boring and most reliable. It's also the layer that handles maybe forty percent of my actual usage. And it took me longer to build than it should have, because for a long time I was trying to make the LLM handle these questions "elegantly" via tool calls, and the LLM was reliably mediocre at that compared to a forty-line Python function.

The principle that fell out: not every question needs an agent. If the answer is deterministic, write a function. Save the agent for questions where reasoning genuinely happens — where you can't write the answer down ahead of time because the answer depends on some interaction between variables you haven't enumerated.

This planning decision — figuring out which workloads are deterministic and writing them as code — is one of the highest-ROI moves in agent design. And no model upgrade helps you here. Claude Opus 5 will still be slower, more expensive, and less reliable at returning the current TOU window than a four-line Python function. The question isn't which model you're using; it's whether the LLM should be in the loop at all.

4. Plan the verifier

I learned this one the hard way three days ago.

I asked Brock — through Telegram, where I usually do — about a warning I was getting from one of my watchdog scripts. Brock replied with a confident, well-formatted, plausible-sounding answer about how the warning was "very likely related to a common and serious data corruption issue" with a specific made-up cause that had absolutely nothing to do with the actual root cause. The model had pattern-matched on a couple of words in my question and confabulated a story that read like an answer.

I wrote about that incident yesterday. The fix had two parts. The first was building the ground-truth coverage for the topic — extending the RAG corpus, writing a tool that pre-fetches the watchdog's recent alert log, wiring it into the relevant specialists. That was important.

The more important fix, and the one relevant to today's argument, was building an output verifier. A separate small model (the same gemma4:e4b that runs my router — local, fast, already loaded) reviews each specialist's response before it ships to me. It asks three questions: does the response cite specific facts that are in the ground-truth block; does it actually answer the user's question; does it use hedging-confabulation phrases without specific cited evidence. The verifier returns PASS or FAIL with a one-sentence reason. On FAIL, the harness re-prompts the same specialist with stricter instructions, and if that still fails, it returns a hedged refusal.
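The control flow described above can be sketched in a few lines. This is an assumption-laden outline, not the actual harness: `Verdict`, `answer_with_verification`, the refusal text, and the two stub functions are invented for the example, and in a real system `produce` and `verify` would each wrap a call to a different model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    passed: bool
    reason: str  # one-sentence explanation from the verifier


# (question, strict) -> draft answer
Producer = Callable[[str, bool], str]
# (question, ground_truth, draft) -> verdict
Verifier = Callable[[str, str, str], Verdict]

REFUSAL = "I don't have enough verified information to answer that."


def answer_with_verification(
    question: str,
    ground_truth: str,
    produce: Producer,
    verify: Verifier,
    max_attempts: int = 2,
) -> str:
    """Ship a draft only if a separate verifier passes it; otherwise refuse."""
    for attempt in range(max_attempts):
        strict = attempt > 0  # re-prompt with stricter instructions after a FAIL
        draft = produce(question, strict)
        if verify(question, ground_truth, draft).passed:
            return draft
    return REFUSAL


# Stubs standing in for the two models; the alert text is made up.
def stub_produce(question: str, strict: bool) -> str:
    if strict:
        return "The watchdog log shows a disk alert at 02:14."
    return "This is very likely a serious data corruption issue."


def stub_verify(question: str, ground_truth: str, draft: str) -> Verdict:
    cited = "02:14" in draft and "02:14" in ground_truth
    return Verdict(cited, "cites the alert log" if cited else "no cited evidence")
```

The important property is that `verify` is passed in separately from `produce`, so nothing in the loop lets the producing model grade its own answer.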

Here's the part that matters for today's argument. After I built that verifier, I tested it against the same hallucination using a frontier-class model. Same query, no DSPI, no RAG, just a frontier model fielding the question cold. The frontier model also confabulated. Different specific story, same shape — a confidently-presented answer pattern-matched from the keywords. The verifier caught it the same way. With DSPI and ground truth in place, the local model got it right. Without verification, both models shipped wrong answers.

This is the cleanest example I have of "the model isn't the variable." The hallucination rate doesn't go to zero with a better model. It goes down some, sure. But the dominant failure mode — confidently wrong answers when the model lacks ground truth and nothing checks the output — is structural, not model-class. And the fix is structural. A separate model verifies. Always. Never the producing agent. That's a planning decision you make before you choose your model.

You don't ask the batter to call his own balls and strikes. The umpire is a separate role.

5. Plan the recovery

The fifth question — what does the system do when the agent fails — is the one most agent projects don't even know they need until something fails badly. Then they realize the agent has been failing all along, just quietly.

LaunchAgent KeepAlive on macOS, where Brock runs, will restart a process that crashed. It will not restart a process that is alive but hung — blocked on a slow HTTP call, looped on a wrong path, wedged in an inference timeout. My agent's reliability looked better than the underlying service uptime should have permitted, until the day Ollama on the GPU box started returning 30-second timeouts every few minutes for an hour straight. KeepAlive saw a healthy process. The agent saw a wall of timeout errors and tried to recover from inside its own context. That's not where recovery should live.

The fix lives outside the agent: a separate daemon that probes service health every thirty seconds, and after three consecutive failures, kicks the service. A watchdog that watches the watchdog. There's a second one that does SHA-256 drift detection on my LaunchAgent configuration files, in case something rewrites them under my feet. There's a third that captures hedged responses overnight, classifies them, and proposes fixes I review the next morning.
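The first of those daemons, the health probe, reduces to a consecutive-failure counter. The sketch below assumes hypothetical `probe` and `kick` callables (for example, an HTTP health check and a service restart command); it does not show how either is implemented, and the class and function names are illustrative.

```python
import time
from typing import Callable


class HealthWatchdog:
    """Kick a service after N consecutive failed probes."""

    def __init__(
        self,
        probe: Callable[[], bool],
        kick: Callable[[], None],
        max_failures: int = 3,
    ) -> None:
        self.probe = probe
        self.kick = kick
        self.max_failures = max_failures
        self.consecutive_failures = 0

    def tick(self) -> bool:
        """Run one probe. Returns True if the service was kicked."""
        try:
            healthy = self.probe()
        except Exception:
            # A probe that raises (timeout, refused connection) counts as a failure.
            healthy = False
        if healthy:
            self.consecutive_failures = 0
            return False
        self.consecutive_failures += 1
        if self.consecutive_failures >= self.max_failures:
            self.kick()
            self.consecutive_failures = 0
            return True
        return False


def run_forever(watchdog: HealthWatchdog, interval_seconds: float = 30.0) -> None:
    """Probe on a fixed interval; meant to run as its own process."""
    while True:
        watchdog.tick()
        time.sleep(interval_seconds)
```

A single healthy probe resets the counter, so a service that is merely flaky doesn't get restarted, while one that is hung for three probes in a row does.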

None of these required a better model. They required planning the answer to question five before the failures happened. The planning question is: when this agent fails — and it will — what notices, and what recovers? If the answer is "the agent itself," your system isn't built yet. The agent is the thing failing; you can't trust it to notice.

Pitcher walks the leadoff hitter? You don't ask the pitcher whether he still has his stuff. You watch from the dugout, and somebody else makes the call.

When does a better model help?

I want to be honest about the carve-out, because I've been arguing strongly in one direction.

There is a class of agent work where a better model genuinely helps. Open-ended reasoning across long contexts. Non-trivial code synthesis where the structure isn't fixed. Cross-document analysis that requires synthesis the planning can't pre-do. Some kinds of creative writing. Some kinds of pattern recognition. For these, a frontier model genuinely outperforms a smaller one, and no amount of planning closes the gap.

I have one specialist in Brock that's planned to use a frontier cloud model — deepseek-v4-pro:cloud via Ollama Cloud, the only cloud carve-out in an otherwise all-local system. It's the debug specialist, for ops root-cause analysis on novel failure shapes I haven't built ground truth for yet. The job requires reasoning across log files, code, configuration, and recent system state to figure out why something is breaking. That work is genuinely model-bound. Plan as I might, I can't pre-fetch the right answer, because the right answer is the one nobody knows yet.

The honest test for whether you need a better model is this: would planning fix this if I had infinite time? If the answer is yes, plan. The model is not your bottleneck; the planning is. If the answer is no — if the work is genuinely open-ended reasoning where structure isn't pre-known — then you have a model question.

In my experience, that describes maybe ten percent of agent workloads. The other ninety percent are planning problems wearing model-question costumes.

The economic angle

There's one more reason I push back on "wait for the next model." Frontier models are expensive, both per call and per surprise bill. The team I know of that ran an agent loop overnight and woke up to a $4,000 cloud spend was using the most expensive model they had access to. The frontier model didn't save them; the lack of cost guards bankrupted them.
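A cost guard doesn't need to be elaborate. As a minimal sketch, assuming you can estimate the cost of a call before making it, a hard ceiling on both dollars and call count stops a runaway loop. The class, exception, and loop names here are hypothetical, and the per-call cost is a made-up number.

```python
class BudgetExceeded(RuntimeError):
    """Raised when the next call would cross a spending or call-count ceiling."""


class CostGuard:
    def __init__(self, max_usd: float, max_calls: int) -> None:
        self.max_usd = max_usd
        self.max_calls = max_calls
        self.spent_usd = 0.0
        self.calls = 0

    def charge(self, usd: float) -> None:
        """Call before each model request; refuses the one that would exceed a limit."""
        if self.calls + 1 > self.max_calls or self.spent_usd + usd > self.max_usd:
            raise BudgetExceeded(
                f"refused call {self.calls + 1}: "
                f"spent ${self.spent_usd:.2f} of ${self.max_usd:.2f}"
            )
        self.calls += 1
        self.spent_usd += usd


def run_loop(guard: CostGuard, cost_per_call: float, steps: int) -> int:
    """Toy agent loop: returns how many calls were allowed before the guard tripped."""
    completed = 0
    try:
        for _ in range(steps):
            guard.charge(cost_per_call)
            completed += 1  # the model call itself would go here
    except BudgetExceeded:
        pass
    return completed
```

With a one-dollar ceiling and 25 cents per call, a thousand-step loop stops after four calls instead of running all night.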

Better planning gets cheaper as you go. Better models get more expensive. The economics compound differently. If your planning is right, you can run on a smaller, cheaper model — locally, even — and pay nearly nothing per call. Your wins compound: fewer failures, fewer retries, fewer cloud-shaped surprises, and eventually a system that runs in your house on hardware you already own.

I'm running Brock across two pieces of hardware in my home — a Mac mini M4 Pro that hosts the supervisor and a few of the lighter specialists, and an NVIDIA DGX Spark that runs Ollama for the heavier inference. mistral-small3.2 is the routine specialist model, with qwen3.6:27b on the same hardware as a different-architecture reliability fallback. The total marginal cost of an answer is roughly the electricity to run inference for two seconds. Frontier-shaped answers, frontier-class reliability — at homelab cost.
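A reliability fallback between two models can be sketched as an ordered list of backends tried in turn. This is an assumption about the general pattern, not a description of how Brock triggers its fallback: the function names are invented and the two backends are stubs that don't call Ollama at all.

```python
from typing import Callable, List, Sequence, Tuple


class AllModelsFailed(RuntimeError):
    """Raised when every backend in the chain errors out."""


def generate_with_fallback(
    prompt: str,
    backends: Sequence[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try each (name, call) pair in order; return (name, reply) from the first success."""
    errors: List[str] = []
    for name, call in backends:
        try:
            return name, call(prompt)
        except Exception as exc:
            errors.append(f"{name}: {exc!r}")
    raise AllModelsFailed("; ".join(errors))


# Stub backends for illustration; real ones would wrap inference requests.
def primary_stub(prompt: str) -> str:
    raise TimeoutError("inference timed out")


def fallback_stub(prompt: str) -> str:
    return f"ok: {prompt}"


used, reply = generate_with_fallback(
    "hi",
    [("mistral-small3.2", primary_stub), ("qwen3.6:27b", fallback_stub)],
)
```

Returning the backend name alongside the reply makes it easy to log how often the fallback is actually doing the work.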

That outcome required eighteen months of planning, not eighteen months of waiting for better models.

What to do tomorrow

If you're building an agent and it's not as reliable as you want it, before you upgrade the model, sit down with your whiteboard and answer the five questions:

- What state does my agent see at the moment it answers? (If "whatever it fetches via tools," fix that first. Pre-fetch ground truth.)

- What's the blast radius of each tool I gave it? (If "everything," split into specialists. One tool each, narrow scope.)

- What questions can I answer without invoking the LLM at all? (Probably forty percent of your workload. Write the function.)

- Who verifies the output before it ships? (If "nobody," build that. Separate model, never the producer.)

- What happens when the agent fails? (If "we hope it doesn't," build a watchdog. Outside the agent.)

Each of these works at the model class you're already using, if you plan for it. Each of these still breaks at the model class you're hoping for, if you don't. Plan them, and your agent gets dramatically better. Don't, and the next model release won't save you.

The next big-name model will arrive on someone's calendar in the next six months. Your agent can ship today, on the model you have, if you've done the planning. That's the option I want you to consider: not a better LLM, better planning.

Better players don't beat better managers, mostly. The managers know which pitcher to call.